One of the best ways to debug Redshift is to use the system tables that Amazon provides. Amazon has also documented best practices for analyzing and improving queries.

There are many ways to tune query performance, and one of the quickest is to check your query queues. When you run queries against your Redshift data source, Redshift routes the queries from Chartio to query queues. Each queue contains a certain number of slots and is allocated a portion of the available memory. You configure this in your workload management (WLM) properties, which determine how memory is divided among the query slots and how queries are routed to specific queues. You can query Redshift’s system tables to see how your query queues are performing and decide whether they need to be tuned.
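Before looking at wait times, it can help to see how the queues are currently set up. The following is a minimal sketch, assuming a manual WLM configuration and read access to the `stv_wlm_service_class_config` system table; the `service_class > 5` filter is an assumption meant to skip the system and superuser queues, so adjust it for your cluster.

```sql
-- Sketch: list the configured WLM queues with their slots and memory.
select
    service_class,
    trim(name)          as queue_name,
    num_query_tasks     as slots,              -- concurrency level of the queue
    query_working_mem   as memory_per_slot_mb, -- working memory per slot
    max_execution_time  as timeout_ms          -- WLM timeout, 0 means none
from stv_wlm_service_class_config
where service_class > 5  -- assumption: skip system and superuser queues
order by service_class;
```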

You can run the following queries to get quick insight into your Redshift query queues. They use Chartio’s calendar variable to limit the date range of the query. You can replace the variables with date values, but keep in mind that omitting the date range will return a large volume of data.

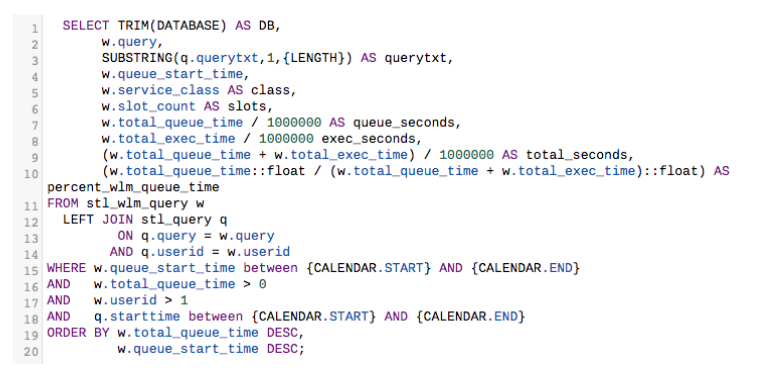

The following query shows how long each recent query waited for an open slot in a query queue before it was executed:

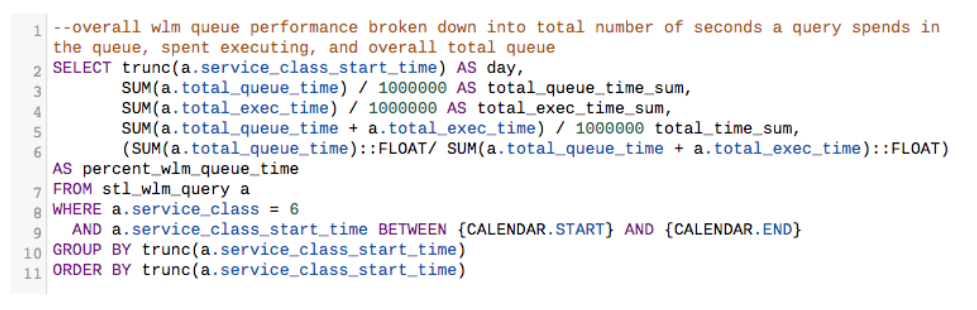
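Because the query above is embedded as an image, here is a text sketch of a comparable query. It is not a transcription of the original: it assumes the `stl_wlm_query` system table, uses a fixed seven-day window in place of Chartio’s calendar variable, and treats `service_class > 5` as a rough filter for user-defined queues.

```sql
-- Sketch: queue wait versus execution time for each recent query.
-- stl_wlm_query stores durations in microseconds.
select
    w.query,
    w.service_class,
    w.queue_start_time,
    w.total_queue_time / 1000000.0 as queue_seconds,
    w.total_exec_time  / 1000000.0 as exec_seconds
from stl_wlm_query w
where w.queue_start_time >= dateadd(day, -7, current_date) -- assumed date range
  and w.service_class > 5  -- assumption: skip system and superuser queues
order by w.total_queue_time desc
limit 50;
```

Sorting by `total_queue_time` puts the queries that waited longest at the top, which is usually where to start looking.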

If you would like to see this information at a summary level, use the following query:

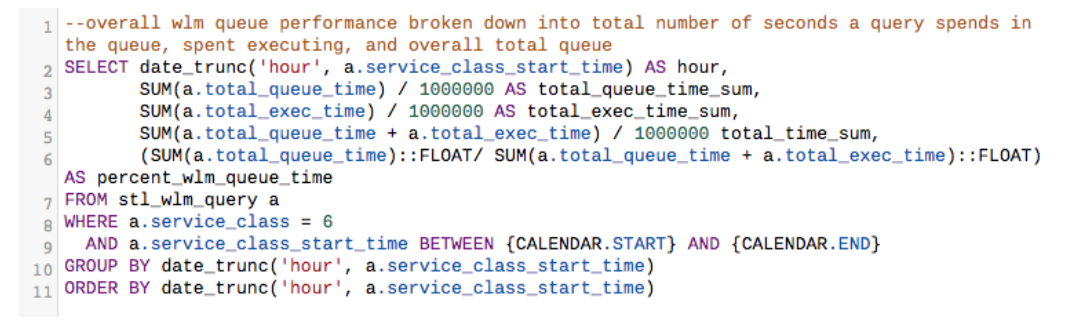
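A summary along these lines could be sketched as follows. This is an illustrative version rather than the query in the image; it again assumes `stl_wlm_query`, a seven-day window, and the `service_class > 5` filter.

```sql
-- Sketch: average and worst-case queue time per WLM queue.
select
    service_class,
    count(*)                           as query_count,
    avg(total_queue_time) / 1000000.0  as avg_queue_seconds,
    max(total_queue_time) / 1000000.0  as max_queue_seconds,
    avg(total_exec_time)  / 1000000.0  as avg_exec_seconds
from stl_wlm_query
where queue_start_time >= dateadd(day, -7, current_date) -- assumed date range
  and service_class > 5  -- assumption: skip system and superuser queues
group by service_class
order by service_class;
```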

To break this down even further, group the results by hour:

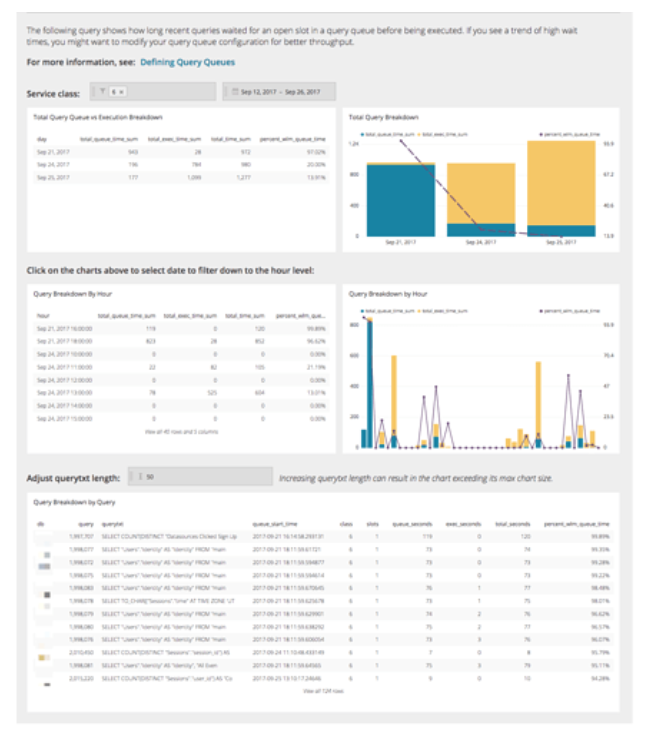
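An hourly breakdown might look like the sketch below. It is an assumption-based stand-in for the query in the image, using `date_trunc` on `queue_start_time` from `stl_wlm_query`; the alias `queue_hour` is only illustrative.

```sql
-- Sketch: total queue and execution time per hour.
select
    date_trunc('hour', queue_start_time)  as queue_hour,
    count(*)                              as query_count,
    sum(total_queue_time) / 1000000.0     as queue_seconds,
    sum(total_exec_time)  / 1000000.0     as exec_seconds
from stl_wlm_query
where queue_start_time >= dateadd(day, -7, current_date) -- assumed date range
  and service_class > 5  -- assumption: skip system and superuser queues
group by 1
order by 1;
```

Charting `queue_seconds` against `exec_seconds` by hour makes it easy to spot the times of day when queries pile up in the queue.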

Put all those together, and you can make a dashboard like the one below:

Using the queries above, we can see that the majority of queries against this particular Redshift data source spend more time executing than waiting in the query queue, which is exactly what we want. When the volume of queries is large, however, queries spend more time in the queue, which indicates that we might want to adjust the concurrency level or query groups.

In Redshift’s WLM configuration, you can have a total of eight user queues (by default you get two) and you can configure the following:

- Concurrency level

- User groups

- Query groups

- WLM memory percent to use

- WLM timeout

- Query monitoring rules
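Some of these settings can also be exercised per session. The sketch below assumes a queue has been configured in WLM with a query group named `dashboards`, which is a hypothetical name; `set query_group` and `set wlm_query_slot_count` are standard Redshift session commands.

```sql
-- Sketch: route this session's queries to the queue whose WLM
-- configuration lists the (hypothetical) query group 'dashboards'.
set query_group to 'dashboards';

-- Optionally claim two slots in that queue for a memory-heavy query.
set wlm_query_slot_count to 2;

select count(*)
from stl_wlm_query
where queue_start_time >= dateadd(day, -1, current_date);

-- Return to the default routing and slot count.
reset wlm_query_slot_count;
reset query_group;
```

Claiming extra slots gives a query more memory but reduces how many other queries the queue can run at the same time, so it is best reserved for occasional heavy queries.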

Depending on your queue performance, you might want to adjust your WLM configuration to avoid query errors and reduce database load. For more information on how each configuration can be used to optimize your query performance, see this article.

Query queues are just one way to optimize and improve query performance. For more information on how to optimize Amazon Redshift performance, see this article that one of Chartio’s partners, Blendo, wrote.