How Amazon Redshift and Google BigQuery Handle Provisioning

Posted on November 29, 2016 in Data Governance

[Editor’s Note: This is the third installment in our “Data Warehouse Blog Series.” In our previous installment, we analyzed how Amazon Redshift and Google BigQuery handle concurrency limitations. You can also read our previous post, What to Consider When Choosing Between Amazon Redshift and Google BigQuery.]

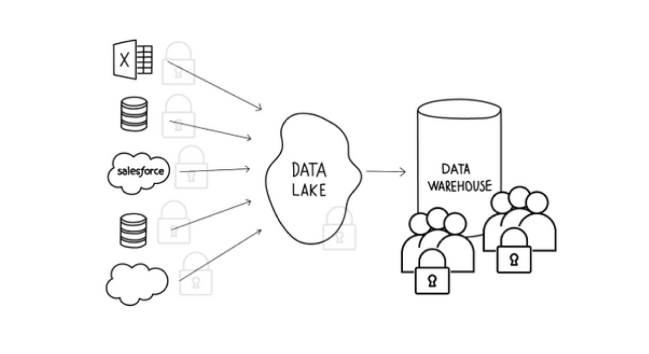

Data warehouses are architected to handle a large volume of data. In fact, many companies use data warehouses to store historical data going back at least three years—and this is a great practice when it comes to enriching information for a target persona or running product usage analysis.

As the overall volume of data grows, it’s not uncommon for a data warehouse to need regular operational checks. We’re analyzing data warehouse operations in four separate parts: provisioning, loading, maintenance and security. Today’s post covers provisioning. Stay tuned as we continue to publish our full analysis of data warehouse operations in Amazon Redshift and Google BigQuery.

Amazon Redshift

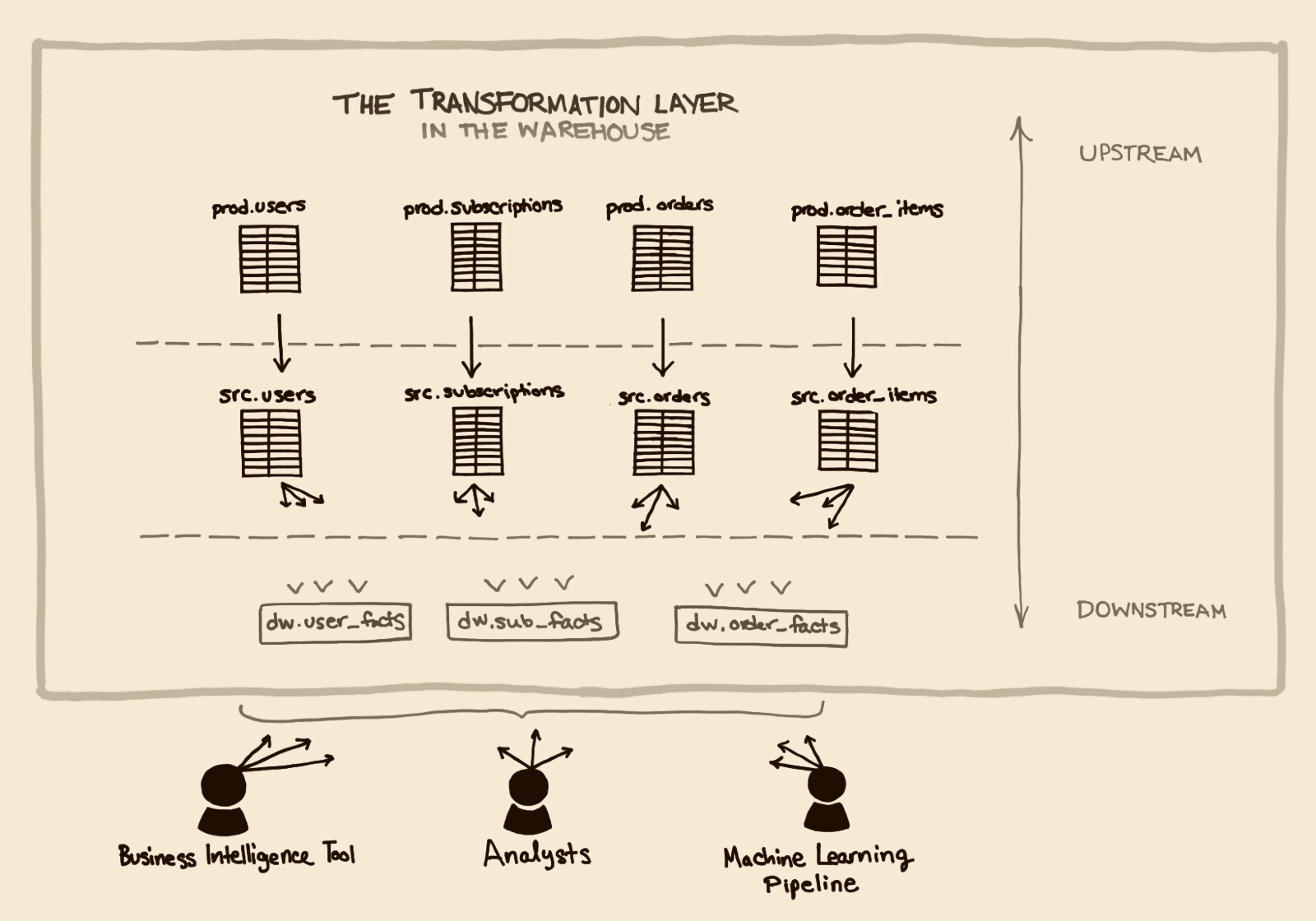

According to its documentation, “an Amazon Redshift data warehouse is a collection of computing resources called nodes, which are organized into a group called a cluster. Each cluster runs an Amazon Redshift engine and contains one or more databases.”

In architecting your Amazon Redshift deployment, you must first provision a cluster made up of one or more nodes. Data is then spread across those nodes according to each table’s distribution style. Currently, Amazon offers four types of nodes. When setting up Amazon Redshift, you launch a cluster and Amazon automates the rest of the process. The number of nodes you choose depends on the size of your dataset and the query performance you need. Many Amazon Redshift users see cluster provisioning and the choice of node types as a source of flexibility, since different node types can be matched to different needs for optimal performance.
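As a rough illustration of the sizing step described above, the sketch below estimates a node count from dataset size and builds the arguments for a cluster launch. The per-node storage figures, the 25% headroom, and the helper names are assumptions made for this example, not AWS recommendations; the dictionary keys follow the parameters of boto3's Redshift `create_cluster` call.

```python
import math

# Assumed per-node storage in GB, for illustration only.
# Check the current AWS documentation before relying on these figures.
ASSUMED_NODE_STORAGE_GB = {
    "dc1.large": 160,
    "ds2.xlarge": 2000,
}


def estimate_node_count(dataset_gb, node_type, headroom=0.25):
    """Estimate how many nodes hold dataset_gb while keeping `headroom` free."""
    usable_gb = ASSUMED_NODE_STORAGE_GB[node_type] * (1 - headroom)
    return max(1, math.ceil(dataset_gb / usable_gb))


def build_create_cluster_request(cluster_id, node_type, node_count, user, password):
    """Build keyword arguments for boto3's Redshift create_cluster call."""
    request = {
        "ClusterIdentifier": cluster_id,
        "NodeType": node_type,
        "MasterUsername": user,
        "MasterUserPassword": password,
    }
    if node_count > 1:
        # Multi-node clusters must state how many nodes they contain.
        request["ClusterType"] = "multi-node"
        request["NumberOfNodes"] = node_count
    else:
        request["ClusterType"] = "single-node"
    return request


# Hypothetical 1 TB dataset on the smaller node type.
nodes = estimate_node_count(1000, "dc1.large")
request = build_create_cluster_request(
    "analytics-cluster", "dc1.large", nodes, "admin", "ChangeMe123"
)
```

With AWS credentials configured, the request could then be submitted with `boto3.client("redshift").create_cluster(**request)`. Storage is only one input; query performance needs may call for more nodes than this estimate suggests.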

To accommodate changing needs, Amazon Redshift is elastic: you can add or remove nodes when necessary. Users may want to scale a cluster up as their data grows, or scale it down if their business needs and data change. Because Amazon automates the entire resizing process, kicking off a resize takes only a few minutes, though how long the resize itself runs depends on how much data the cluster holds.

Although a cluster is read-only while it is being resized, Amazon allows users to run a parallel environment of the same data and continue to run analytics on the replicated cluster without impacting the production cluster or ongoing workload.
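A resize like the one described above can be requested programmatically. The following is a minimal sketch, assuming the boto3 SDK and configured AWS credentials; the helper names and the cluster identifier are hypothetical, while `modify_cluster` and its `ClusterIdentifier`, `ClusterType`, `NumberOfNodes` and `NodeType` parameters belong to boto3's Redshift client.

```python
def build_resize_request(cluster_id, new_node_count, node_type=None):
    """Build keyword arguments for boto3's Redshift modify_cluster call."""
    if new_node_count < 1:
        raise ValueError("a cluster needs at least one node")
    request = {"ClusterIdentifier": cluster_id}
    if new_node_count > 1:
        request["ClusterType"] = "multi-node"
        request["NumberOfNodes"] = new_node_count
    else:
        request["ClusterType"] = "single-node"
    if node_type is not None:
        # Optionally switch node types as part of the same resize.
        request["NodeType"] = node_type
    return request


def submit_resize(request):
    """Send the resize request; needs boto3 installed and AWS credentials."""
    import boto3  # imported lazily so the builder works without the SDK

    return boto3.client("redshift").modify_cluster(**request)


# Hypothetical example: grow a cluster to six nodes.
resize = build_resize_request("analytics-cluster", 6)
```

Keeping the request builder separate from the network call makes it easy to review or log a resize before it is submitted.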

Google BigQuery

For Google BigQuery, configuring clusters and nodes is not part of the architecture. Rather, data is loaded into tables and queries are run against those tables directly.

According to Google’s blog post, “We have customers currently running queries that scan multiple petabytes of data or tens of trillions of rows using a simple SQL query, without ever having to worry about system provisioning, maintenance, fault-tolerance or performance tuning.”

As of September 2016, Google has released a flat-rate pricing model that gives high-volume enterprise customers a more stable monthly cost for queries than the on-demand model, which charges by the amount of data processed. Flat-rate pricing starts at $40,000 per month for an unlimited number of queries. It’s worth noting that with this pricing model, storage is priced separately.

For many smaller companies, forking over $40,000 per month for unlimited queries may not be an option. While provisioning may seem easier in Google BigQuery, the trade-off under on-demand pricing is a less predictable monthly bill and the need to enforce quota thresholds to keep the system cost-effective.
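To make the pricing trade-off concrete, here is a small worked sketch. The $40,000 flat rate comes from the figures above; the on-demand price of $5 per terabyte processed is an assumption for illustration, so substitute the rate from Google's current price list.

```python
FLAT_RATE_USD_PER_MONTH = 40_000    # flat-rate starting price quoted above
ASSUMED_ON_DEMAND_USD_PER_TB = 5.0  # assumption: on-demand price per TB processed


def on_demand_cost(tb_processed, usd_per_tb=ASSUMED_ON_DEMAND_USD_PER_TB):
    """Monthly query cost under on-demand pricing."""
    return tb_processed * usd_per_tb


def break_even_tb(flat_rate=FLAT_RATE_USD_PER_MONTH,
                  usd_per_tb=ASSUMED_ON_DEMAND_USD_PER_TB):
    """Monthly TB processed at which both plans cost the same."""
    return flat_rate / usd_per_tb


def cheaper_plan(tb_processed):
    """Name the cheaper plan for a given monthly query volume."""
    if on_demand_cost(tb_processed) > FLAT_RATE_USD_PER_MONTH:
        return "flat-rate"
    return "on-demand"


print(break_even_tb())       # 8000.0 TB per month under the assumed rate
print(on_demand_cost(200))   # 1000.0 USD for 200 TB processed
print(cheaper_plan(10_000))  # flat-rate
```

Under these assumed numbers, a company would need to scan roughly 8,000 TB per month before the flat rate pays off, which is why the flat-rate plan mainly suits high-volume customers. Storage costs are excluded here because they are billed separately.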

Conclusion

If your company is willing to build a data warehouse infrastructure upfront and is able to think proactively about data organization, then Amazon Redshift is the clear choice. With several node types available, some with huge storage capacities, Amazon Redshift is a robust system used by both enterprises and scaling startups.

Google BigQuery has taken an entirely different approach to provisioning, forgoing upfront organization because Google can draw on hardware at a scale its competitors cannot match. This architecture may appeal to companies that want to dive into the deep end first and are willing to make trade-offs on cost, future data migration and infrastructure. Alluring as that may be, lean companies should not ignore the cost factor.

Choosing between the two systems may come down to whether you have the preference and the ability to provision upfront, or would rather forgo the process altogether.

In our next installment, we’ll analyze the differences in data loading between Amazon Redshift and Google BigQuery. To get started on choosing a data warehouse now, download our white paper, What to Consider When Choosing Between Amazon Redshift and Google BigQuery.